Edge Computing and the Future

News about 5G has filled headlines lately, promising greater speeds and lower latencies than 4G LTE. Articles point to revolutionary examples like remote surgery, vehicle-to-vehicle communication, Internet-of-Things adoption, and real-time updates, all of which are exciting advances.

But a big question many might have is: how does 5G enable that? Besides using higher radio frequencies that allow more data to be transmitted faster, or more towers and more backhaul, what’s the special sauce?

What is Edge Computing?

One of the key enabling ingredients is “edge computing”. Edge computing essentially brings computing resources closer to the devices that need them, such as mobile phones or vehicles.

The speed of light is still a limitation. The fastest that data can travel from Los Angeles to New York and back is about 26 milliseconds, and over the general internet it’s at least 3 times slower. For faster round-trip times, keep the traffic within Los Angeles. The edge is normally contrasted with a central cloud, where the servers sit in a few large, distant data centers.
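
The 26 millisecond figure can be checked with some quick arithmetic. The sketch below is only a back-of-the-envelope estimate: the distance is an approximate straight-line figure, the two-thirds factor for fiber is a common rule of thumb, and the 50 km metro distance is a made-up value for comparison.

```python
# Back-of-the-envelope round-trip times. All distances are assumptions.
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in a vacuum
FIBER_FACTOR = 2 / 3               # assumption: light in glass fiber travels at roughly 2/3 of that

LA_TO_NY_KM = 3_940                # assumption: approximate straight-line distance
LA_METRO_KM = 50                   # assumption: a server somewhere in the same metro area


def round_trip_ms(distance_km: float, speed_factor: float = 1.0) -> float:
    """Ideal round-trip time in milliseconds over a straight path, ignoring all equipment delays."""
    one_way_seconds = distance_km / (SPEED_OF_LIGHT_KM_S * speed_factor)
    return 2 * one_way_seconds * 1000


print(f"LA <-> NY, vacuum:   {round_trip_ms(LA_TO_NY_KM):.1f} ms")
print(f"LA <-> NY, fiber:    {round_trip_ms(LA_TO_NY_KM, FIBER_FACTOR):.1f} ms")
print(f"Within LA, fiber:    {round_trip_ms(LA_METRO_KM, FIBER_FACTOR):.1f} ms")
```

Real networks add routing, queuing, and processing delays on top of these numbers, so treat them as a floor rather than a prediction.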

A sizeable portion of the Internet already operates on a similar model through Content Delivery Networks (CDNs), which mainly serve content like images and videos. You may have heard of a few, such as Cloudflare, AWS CloudFront, and Akamai. These bring the content closer to the users and help offload that traffic from the core servers. Most CDNs have a presence in almost every major city in the world, including Los Angeles.

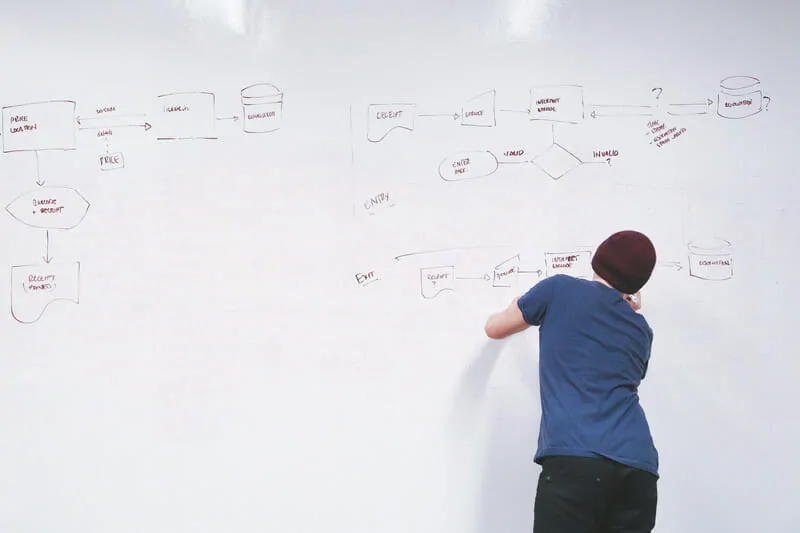

How Does Edge Computing Work?

Put simply, bringing the computing closer to the devices means moving the servers closer. A CDN serves whole assets like images, whereas servers and applications each have their own custom code and logic that must be executed to make decisions and process data.

An Edge Computing service provider would then operate as a more customizable CDN, by offering the ability to run an application’s custom code on their servers.

The market for edge computing service providers is still evolving. We’ve written before about CDNs that already let you run custom code on their servers. This is nowadays called “serverless computing”: the code is packaged in one of various formats and uploaded to the platform. That may or may not be sufficient for the latencies certain applications require.

Edge Computing vs Serverless

Serverless computing is the ability to run software code without needing to provision the underlying hardware the software runs on. There are various approaches, the most common being to upload the raw code.

Serverless is also an abstraction that makes the code easier to run in different environments. That extends to places like CDN servers or a wireless carrier’s cell tower, if the provider offers that ability. Serverless functions are typically intended to be small pieces of software with short execution times, on the scale of a single website request.

In a general sense, serverless can be thought of as similar to edge computing when the serverless computation can be moved closer to the end device. As it becomes more common to get closer to the end device, the edge itself moves: yesterday’s edge is no longer considered today’s edge.

Depending on the serverless execution model on offer, it may not be superior to other edge computing methods, such as running a virtual machine, which allows sustained execution and can be more efficient because the work stays within a single machine.

Bringing the Edge even closer

Some applications need the edge closer than the nearest CDN. For example, Augmented Reality (AR), which enhances a view with additional information or features like avatars for games, has a threshold of about 15ms before it feels unnatural. Here, the AR code could generate part of the scene from multiple nearby users, which might be too much work for the AR headset itself. If the nearest CDN server is too far away or the network is too slow, that can be an issue.
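
To make the 15ms threshold concrete, here is a minimal sketch of a latency budget check. The site names and timings are invented for illustration and are not measurements; only the 15ms budget comes from the text above.

```python
from dataclasses import dataclass

AR_BUDGET_MS = 15.0  # threshold mentioned above


@dataclass
class ComputeSite:
    name: str
    round_trip_ms: float   # hypothetical network round trip to the site
    processing_ms: float   # hypothetical time to compute the scene there

    @property
    def total_ms(self) -> float:
        return self.round_trip_ms + self.processing_ms


def pick_site(sites, budget_ms=AR_BUDGET_MS):
    """Return the fastest site that fits within the budget, or None if none does."""
    within_budget = [site for site in sites if site.total_ms <= budget_ms]
    return min(within_budget, key=lambda site: site.total_ms, default=None)


# Hypothetical candidates, from farthest to nearest.
sites = [
    ComputeSite("central cloud", round_trip_ms=60.0, processing_ms=3.0),
    ComputeSite("metro CDN node", round_trip_ms=12.0, processing_ms=4.0),
    ComputeSite("carrier edge", round_trip_ms=5.0, processing_ms=4.0),
]

best = pick_site(sites)
print(best.name if best else "no site meets the budget")
```

With these made-up numbers, the metro CDN node narrowly misses the budget once processing time is added, which is the situation where a closer edge starts to matter.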

With changes in 5G and 4G LTE networks helping to enable edge computing, wireless carriers, or providers partnering with them, are opening their networks to bring computing even closer to mobile devices and possibly home internet connections. One such service is AWS Wavelength, which brings a subset of AWS cloud functionality into the networks of carriers like Vodafone.

Sometimes, a carrier might even run the computation right at the cell phone tower, for the least amount of latency short of devices or vehicles communicating directly with each other.

What are some more examples of Edge Computing?

Many articles on 5G and other new areas of development list what the technology can enable, but they don’t go into detail on how. In a lot of cases, edge computing is the enabler for those possibilities.

Most web and software applications will benefit sufficiently from a CDN’s “serverless” edge platform rather than needing to be as close as possible to user devices. When latency is important, sometimes moving as close as possible can make an application feel even faster and more enjoyable to use. It can also provide some extra redundancy if there’s an interruption reaching the central cloud servers.

Remote Surgery

This has been a common example, but there probably isn’t much edge computing involved here. It is more likely to depend on the network, such as staying within the carrier’s network and avoiding the general routed internet. One key enabler is the network slicing that 5G is going to provide for distinct classes of traffic priority. Remote surgery would be among the highest-priority traffic and would get the least wireless interference.

Augmented Reality

Some of the scene’s image recognition and generation can be offloaded to an edge node (e.g., a server of some type), either to support low-powered AR headsets or to save battery life when such edge compute is available. The image recognition can help identify objects in the scene more quickly and look up their details, while the AR headset handles less intensive tasks such as object tracking.

The edge could also validate data received from a client, much like an online video game server does, flagging anything that doesn’t seem feasible, such as someone moving too quickly or sending spam messages.

Internet of Things

This is probably one of those areas where edge computing acts more as a buffer and an additional layer of redundancy than as a way to reduce latency. The edge can store the data from an IoT sensor and, if the central server is down, deliver it later. An edge node could also receive data from many sensors, then aggregate and process it before sending it to the central servers. That would help offload computation and minimize bandwidth.
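
The buffering and aggregation described above could be sketched roughly as follows. This is an illustration only: the `EdgeBuffer` class, its method names, and the summary fields are all hypothetical, and `send` stands in for whatever upload call a real system would use.

```python
from collections import deque
from statistics import mean


class EdgeBuffer:
    """Hypothetical edge-side buffer: aggregates readings and holds them while the central server is down."""

    def __init__(self, send):
        self._send = send        # assumed callable; raises ConnectionError when the central server is unreachable
        self._readings = {}      # sensor_id -> raw values collected in the current window
        self._pending = deque()  # summaries waiting to be delivered

    def record(self, sensor_id, value):
        self._readings.setdefault(sensor_id, []).append(value)

    def close_window(self):
        """Collapse the raw readings into one summary per sensor and queue the summaries."""
        for sensor_id, values in self._readings.items():
            self._pending.append({
                "sensor": sensor_id,
                "count": len(values),
                "min": min(values),
                "max": max(values),
                "mean": mean(values),
            })
        self._readings = {}

    def flush(self):
        """Deliver queued summaries in order; stop at the first failure and keep the rest for later."""
        delivered = 0
        while self._pending:
            try:
                self._send(self._pending[0])
            except ConnectionError:
                break
            self._pending.popleft()
            delivered += 1
        return delivered
```

Sending one summary per sensor per window, instead of every raw reading, is where the bandwidth saving comes from. A summary is only removed from the queue after `send` succeeds, so an outage delays delivery rather than losing data.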

There are some areas where running AI models at the edge would be useful, like an often-cited example of testing a jet engine sensor, but more specialized and specific setups would likely be a better choice.

Vehicle-to-Vehicle Communication

This also depends on the network for low-latency communication, such as minimizing the delay in relaying the fact that an accident just happened. Some work could be offloaded to the edge, such as additional image processing to assist a vehicle, or authenticating and attesting the data so that verification secrets don’t reside in a vehicle’s computer, where they might be hacked and stolen.

Artificial Intelligence for Traffic Patterns

It could make more sense to run and continually train an artificial intelligence network at the edge instead of from a centralized cloud location, since each area could have a unique pattern. The AI could control traffic lights for more optimized traffic flow, confirm and relay incidents, and collect metrics, such as the number of cars that pass an intersection, which could be stored in a central database.

The trained network could be periodically copied to the centralized cloud for backup and for optimization or pruning. Sending periodic snapshots, rather than a continuous stream, would reduce the bandwidth and computation needed. If the edge platform is unavailable, the AI network could run from the cloud servers instead.

Mobile and Geographic Reality-Tied Games

Similar to Augmented Reality offloading some aspects, edge computing may enable low-latency remote streaming of games that might be too resource-intensive for mobile devices. This includes games involving hundreds or thousands of active players who are physically or semi-virtually in the same grid area.

Within the same grid, players in the same area could exchange data within that edge network to minimize latency. The edge could also collect metrics and telemetry to help understand how the game is being played, guide future optimizations, or detect cheating.

Another benefit is application-level multicast, where the edge relays the same or modified data to many players. For example, a central game server decides that an area of a map grid with hundreds of players should receive a bonus. The game server would send one command to the edge node responsible for that grid, and the edge node would handle modifying it and sending it to each player, instead of the central server having to maintain thousands of connections.
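
A rough sketch of that fan-out is below. The `EdgeNode` class, the player IDs, and the command format are all made up for illustration; a real game would deliver messages over network connections rather than appending to in-memory lists.

```python
class EdgeNode:
    """Hypothetical edge node that fans one server command out to every player in its grid."""

    def __init__(self, grid_id):
        self.grid_id = grid_id
        self._players = {}  # player_id -> callable that delivers a message to that player

    def connect(self, player_id, deliver):
        self._players[player_id] = deliver

    def multicast(self, command, personalize=None):
        """Send the command to every connected player, optionally tailoring it per player."""
        for player_id, deliver in self._players.items():
            message = personalize(player_id, command) if personalize else command
            deliver(message)
        return len(self._players)


# Stand-in for player connections: each player just gets an inbox list.
inboxes = {player_id: [] for player_id in ("ana", "ben", "chi")}
node = EdgeNode("grid-7")
for player_id, inbox in inboxes.items():
    node.connect(player_id, inbox.append)

# The central server sends this once; the edge node handles the rest.
bonus = {"type": "bonus", "coins": 50}
sent = node.multicast(bonus, lambda player_id, cmd: {**cmd, "player": player_id})
print(f"one command in, {sent} messages out")
```

The central server’s cost stays at one message per grid regardless of how many players are in it, while the per-player work happens close to those players.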

Building Your Own Edge Compute Network

There are stories of companies building out their own edge networks at their places of business, such as fast-food restaurants that want to compute and aggregate data without relying on a central server and an internet connection that sometimes goes down. Point-of-sale terminals are less capable devices, so a local edge server can take on that work.

When the internet connection is unavailable, accepting credit cards would typically be unavailable too. One possibility is keeping a local copy of loyalty scores on the edge server in the facility. If a loyal customer passes the fraud detection AI running on the edge, the edge controller can allow a temporary bypass that lets the credit card terminal operate in a “store and forward” manner, storing the transaction locally and securely until the internet connection is restored.
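
The decision flow could look something like the sketch below. Everything here is hypothetical: the thresholds, the field names, and especially `looks_fraudulent`, which is a trivial stand-in for the fraud detection model mentioned above. A real deployment would also need to encrypt the queue and follow the card networks’ rules for offline transactions.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeController:
    loyalty_scores: dict          # local copy: customer_id -> loyalty score
    min_loyalty: int = 100        # hypothetical threshold for a "loyal" customer
    offline_limit: float = 25.00  # hypothetical cap per offline transaction
    queue: list = field(default_factory=list)

    def looks_fraudulent(self, txn) -> bool:
        # Placeholder rule standing in for a real fraud detection model.
        return txn["amount"] > self.offline_limit

    def authorize(self, txn, online: bool) -> str:
        if online:
            return "send_to_processor"  # normal path: let the payment processor decide
        score = self.loyalty_scores.get(txn["customer_id"], 0)
        if score >= self.min_loyalty and not self.looks_fraudulent(txn):
            self.queue.append(txn)      # store now, forward later
            return "stored"
        return "declined"

    def forward(self, submit) -> int:
        """After the connection returns, submit queued transactions in order."""
        sent = 0
        while self.queue:
            submit(self.queue[0])       # remove only after a successful submit
            self.queue.pop(0)
            sent += 1
        return sent
```

For example, with `EdgeController(loyalty_scores={"c1": 250})`, a small offline purchase by customer `c1` would be stored, while the same purchase by an unknown customer would be declined until the connection is back.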

Your software idea as an example here!

Have a software development idea that might benefit from edge computing?

Contact us and let’s discuss what the best solution can be.