Photoshop's Biggest New Feature in Years

The image editing software's new Generative Fill tool, although imperfect, feels revolutionary, capable of creating impressively detailed and convincing imagery in seconds.

I've been using Adobe Photoshop since 2001, when I took a middle school art class on the exciting new field of digital art. The version my class used was Photoshop 5.5, whose big new selling point was "Save for Web." A few years later, in 2005, Adobe added the Spot Healing brush, which, unlike the Clone Stamp tool, didn't require you to sample existing pixels in the image in order to remove skin blemishes, watermarks, and other imperfections. Seeing it for the first time felt like witnessing magic.

In the eighteen years since then, Photoshop has grown and evolved substantially, though for at least the last decade or so, each new version has felt like a mere incremental refinement of what came before. Indeed, for a while it seemed as if Adobe was more focused on building out its Creative Cloud infrastructure and locking users into its subscription-based ecosystem than on rolling out truly groundbreaking features.

Earlier this year, however, Adobe made its biggest splash in years with the introduction of its new artificial intelligence-powered Generative Fill tool, which allows users to add, remove, and expand content using optional natural language prompts.

Use Case: Expanding Cropped Photos

Although the sky is the limit for AI-generated content, perhaps Generative Fill's most immediately useful application is in streamlining traditionally labor-intensive asset creation tasks.

One common situation that I encounter when redesigning a website is that the client has expended a lot of time, effort, or money to create or source beautiful image assets--for the old website.

Usually, they've composed and cropped their photos to fit a certain template or aspect ratio that is incompatible with the new design. Often the images are frustratingly close to being usable, and staging a new photoshoot or otherwise sourcing completely new images seems like a waste of resources when the existing ones are so close to what we need.

Sometimes cropping a photo to a new aspect ratio would mean cutting critical content out of the composition, so expanding the image seems like the better solution. But while cropping is relatively simple because it only removes pixels, expanding an image requires creating new pixels that don't already exist, and until recently that wasn't something software could do on the fly.

Traditionally, expanding a photo would involve a human painting in the new parts of the image, or manually stitching parts of other photos onto the base image to create a seamless collage--a time-consuming and laborious process.

Enter Generative Fill. This Photoshop tool allows you to select any part of an image or canvas, provide an optional text prompt, and fill the selection with AI-generated content. Photoshop generates three options at a time, and usually at least one will be usable.

What's particularly remarkable is how seamlessly Photoshop matches the perspective, exposure, tint, and other aspects of the new image to the original. I've found that when I'm merely trying to expand an image, a text prompt is often not necessary at all, as Photoshop will examine the content of the image and create new, compatible imagery from that alone.

If you do use a text prompt, you will often have to fine-tune it and run the tool a few times to get a suitable result. This is part of the learning curve of every AI tool I've used: learning to phrase your prompts in a way the AI understands and that reduces unexpected results.

Even with a precise, carefully worded prompt, Photoshop's AI will sometimes generate puzzling, seemingly random elements in an otherwise appropriate result. As it stands, Photoshop's AI tools can be thought of as a competent, efficient production assistant, or as Adobe puts it, a "co-pilot in creative and design workflows."

Step-by-Step Generative Fill Workflow

Here's how we seamlessly expanded an existing image to fill a taller aspect ratio.

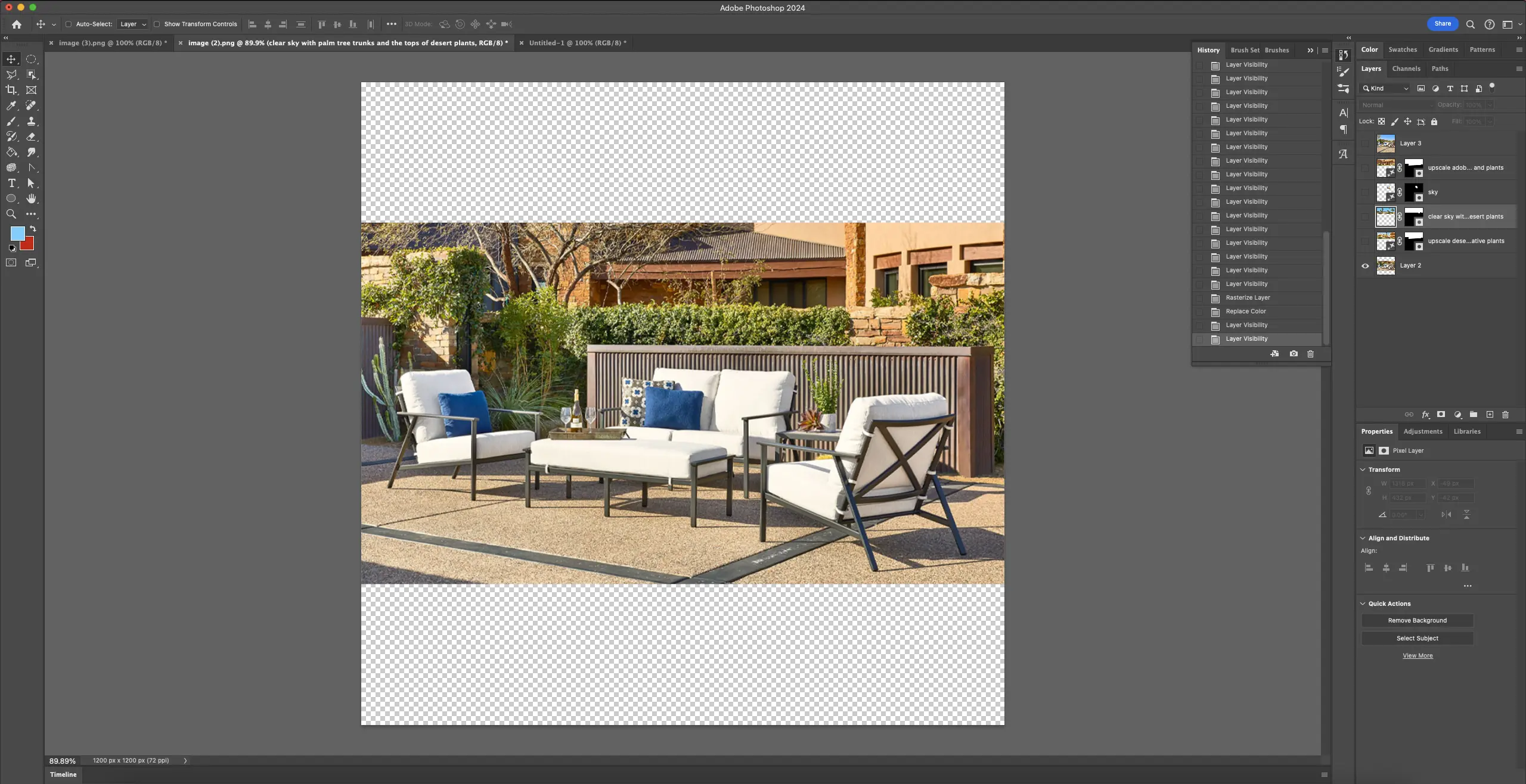

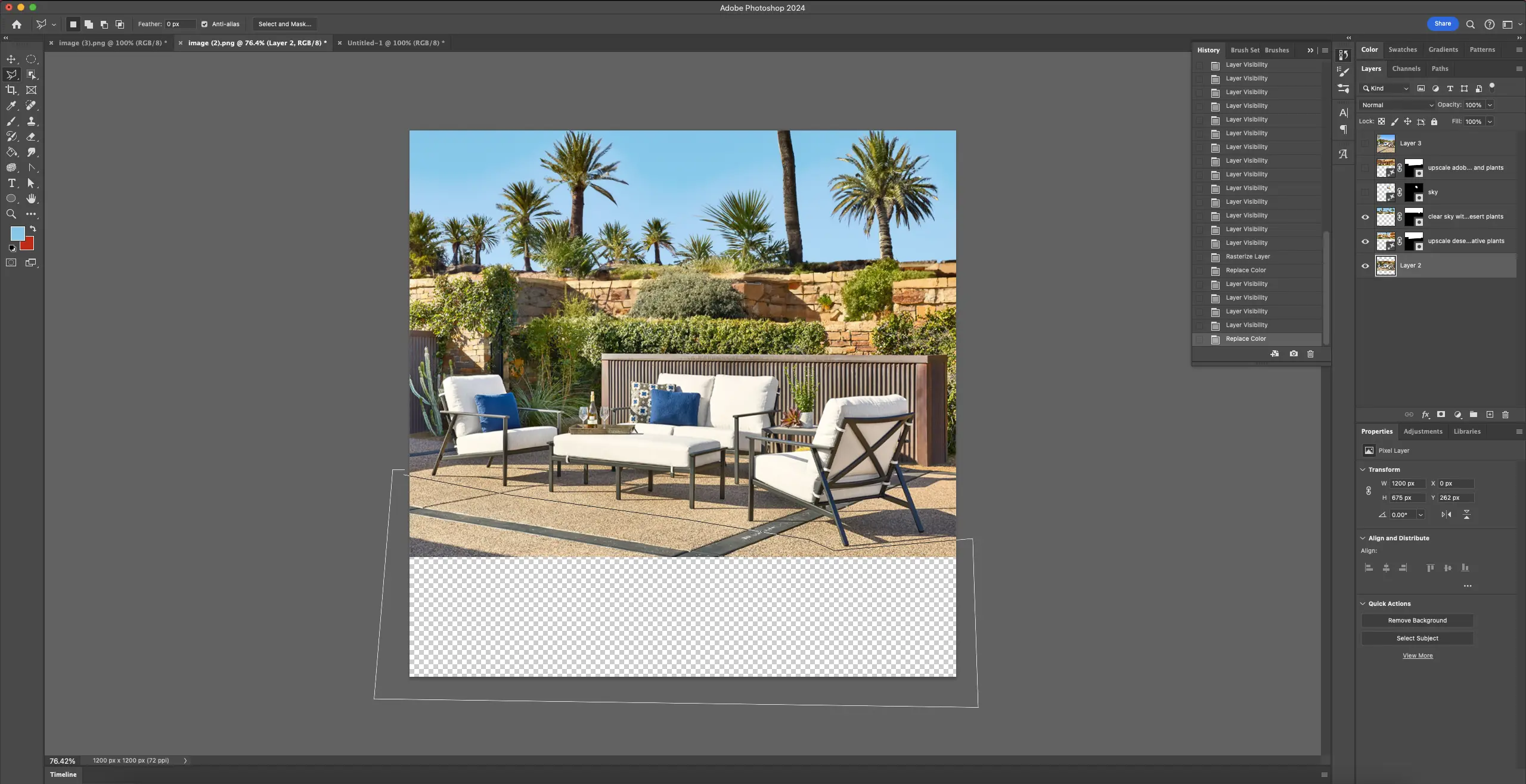

1. First, we take our existing photo and expand the canvas to the desired size. Our new aspect ratio is a bit taller than the old one, which creates some empty space at the top and bottom of our new composition. We've made the canvas even taller than the final crop size. It's always easiest to create more image than we need and then crop at the end.

2. The first new part of the image that we'll tackle is the top portion. In the original photo, we see part of a house, but it is cut off. It is also rather detailed and takes away from the main focus of the photo. The goal for this portion of the image will be to generate a realistic and less busy background which extends to the top of the bigger canvas.

We use the polygonal lasso tool to select the area of the existing photo we want to replace plus the new area of canvas. The polygonal lasso tool allows for a good compromise between the speed of the rectangular marquee and the precision of the lasso tool.

For the prompt, we've entered "upscale desert house with native plants." This is basically a description of the existing background, and while the text prompt is optional, it gives the AI specific elements to include.

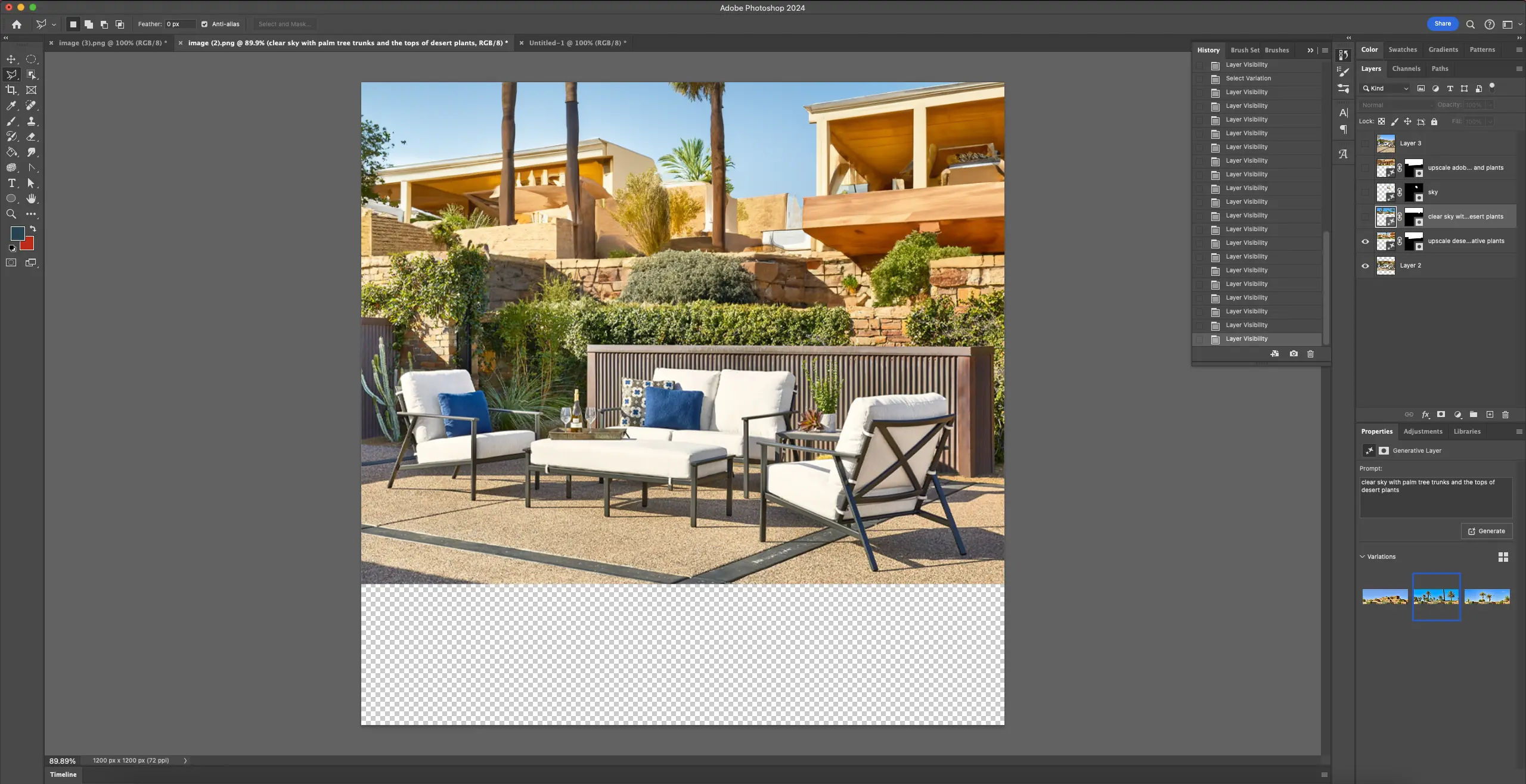

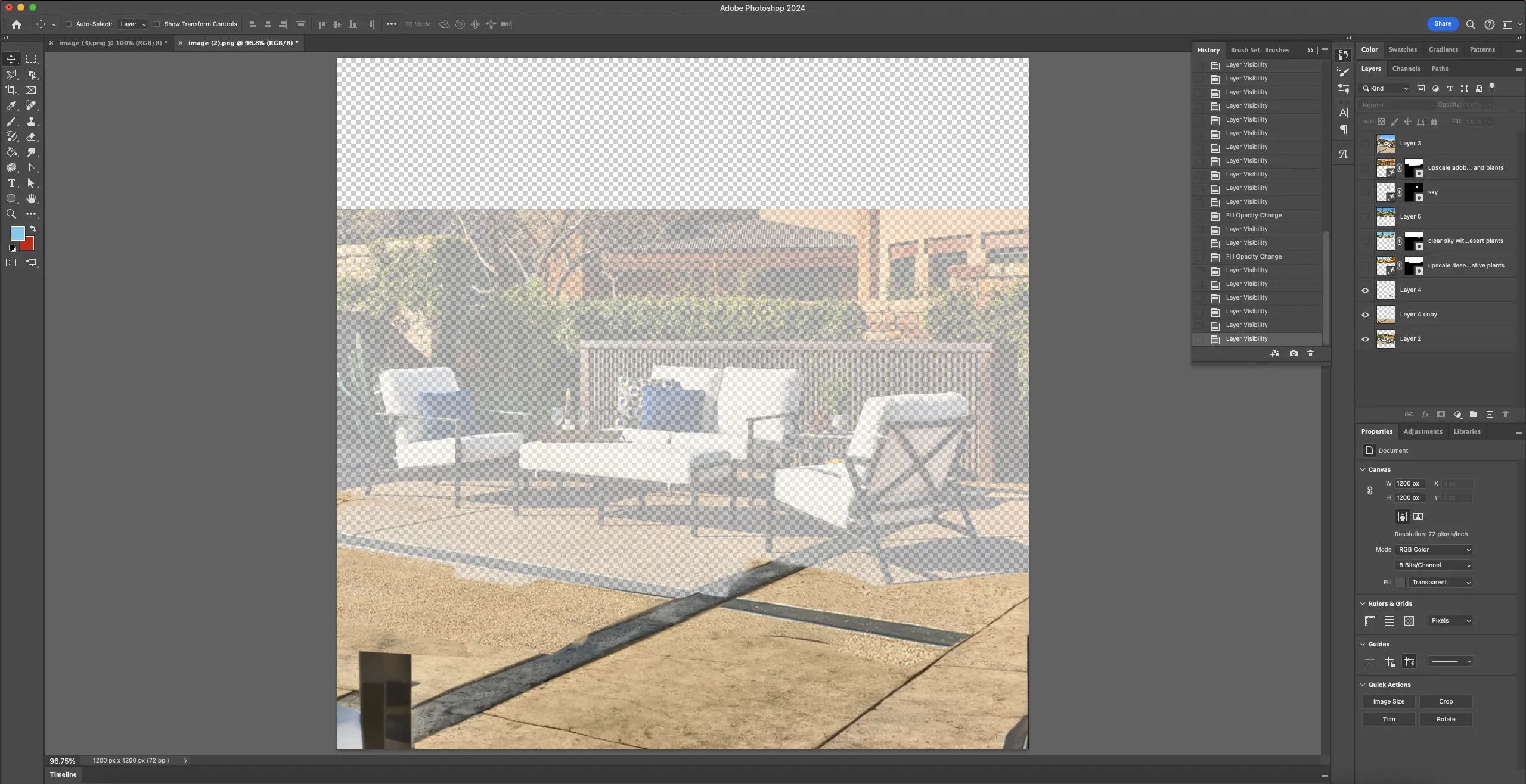

3. The results of our initial prompt look promising. At first glance, the AI seems to have generated some cool-looking contemporary desert architecture, but the more you look at it, the more mistakes you see in the geometry and perspective--odd details that give the image away as AI-generated.

This might be OK for a mockup, but we want this image to be realistic and convincing, so we'll continue to fine-tune it. We like the stone wall that the AI has generated, but we'll replace the houses with more sky and plants. Organic shapes are more forgiving, since viewers don't expect them to have exact symmetry, perfect right angles, or regularly repeating elements.

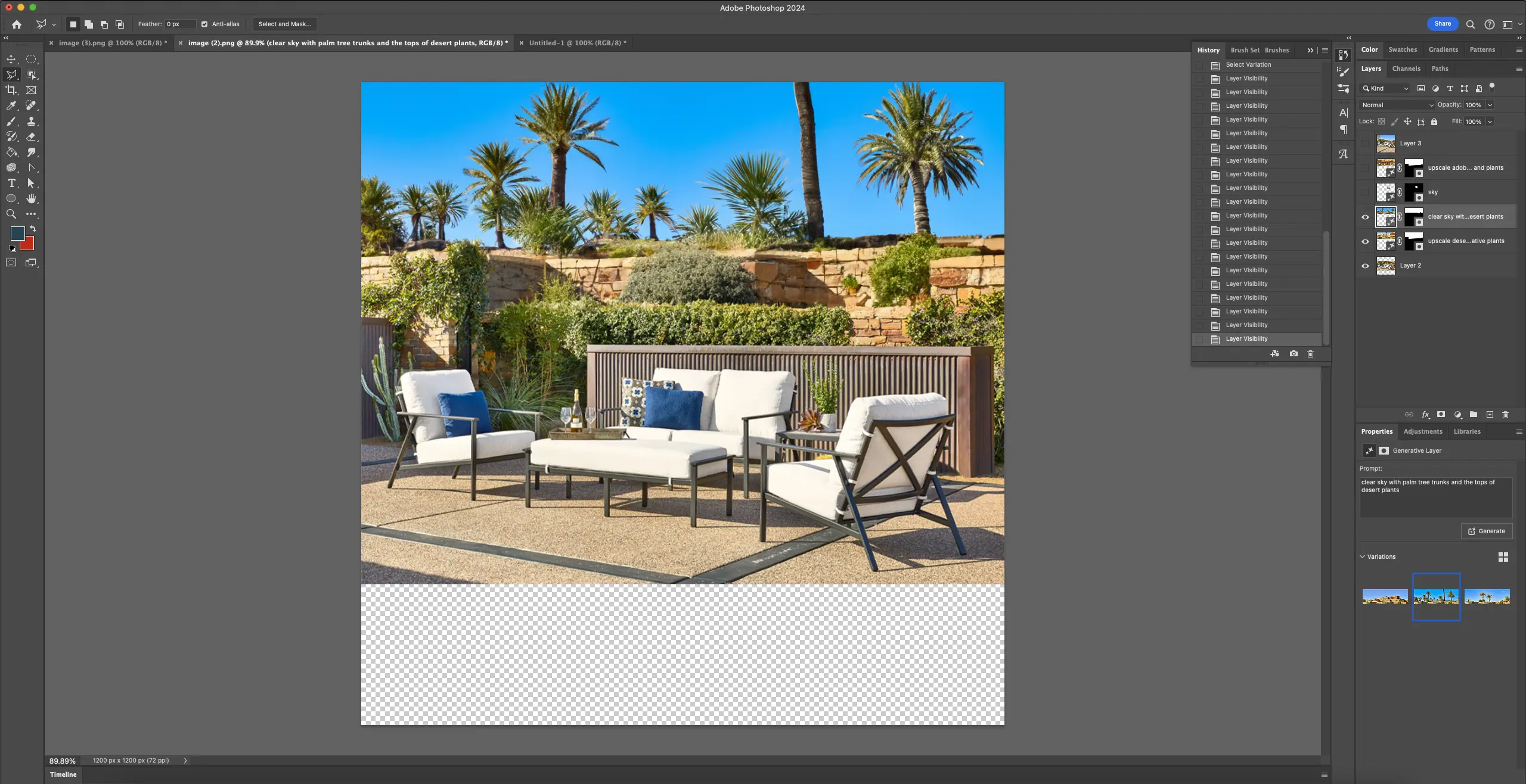

We'll prompt the AI with "clear sky with palm trees and the tops of desert plants." We've included the "tops" part because we're seeing this part of the landscape over a stone wall.

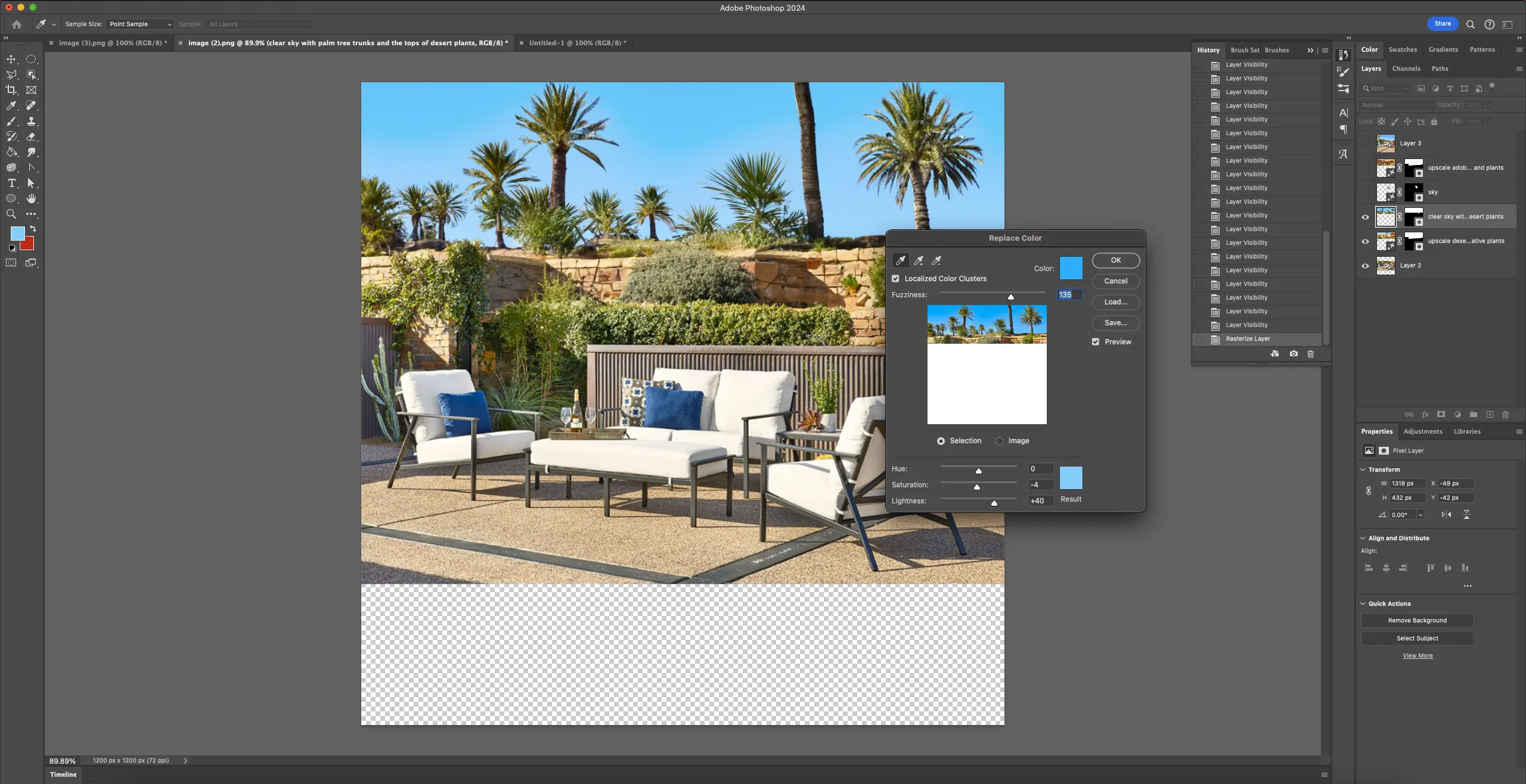

4. The added sky looks great, except that it's too saturated, so we tone it down.

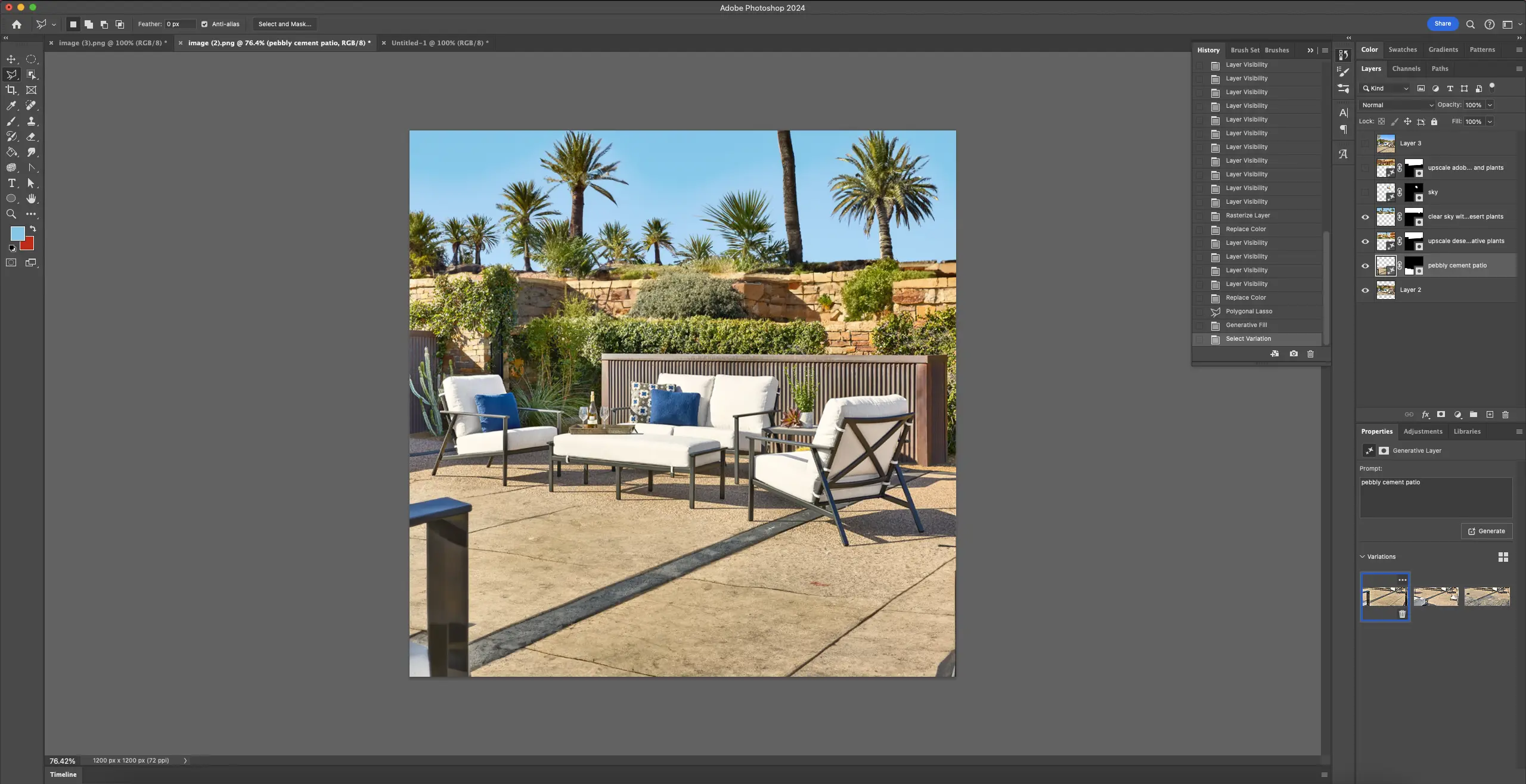

5. The top part looks done, so we'll move on to the bottom portion of the image. Here, we want to extend the paved patio. Once again we select the area we want to fill with the polygonal lasso, and prompt the Generative Fill tool with the text "pebbly cement patio."

6. The results of this Generative Fill look decent, but have a few issues.

For one, there's what looks like the angular arm of a chair or other piece of furniture in one corner. This is unexpected, and while it doesn't look entirely out of place (the software seems to have picked up on the style of furniture in the original image), it's certainly distracting.

Additionally, there are some artifacts along the edges of the image, and the texture of the extended patio isn't quite right.

To address these issues, we'll have to go in and manually touch up the new parts of the image: Generative Fill again to get rid of the stray furniture frame, plus old-fashioned tools like the Healing Brush to remove the artifacts and the Clone Stamp to copy some of the existing patio texture onto the new areas.

Finally, we'll also go in and manually fine-tune the layer mask for the new section of the image so that it seamlessly blends with the existing content.

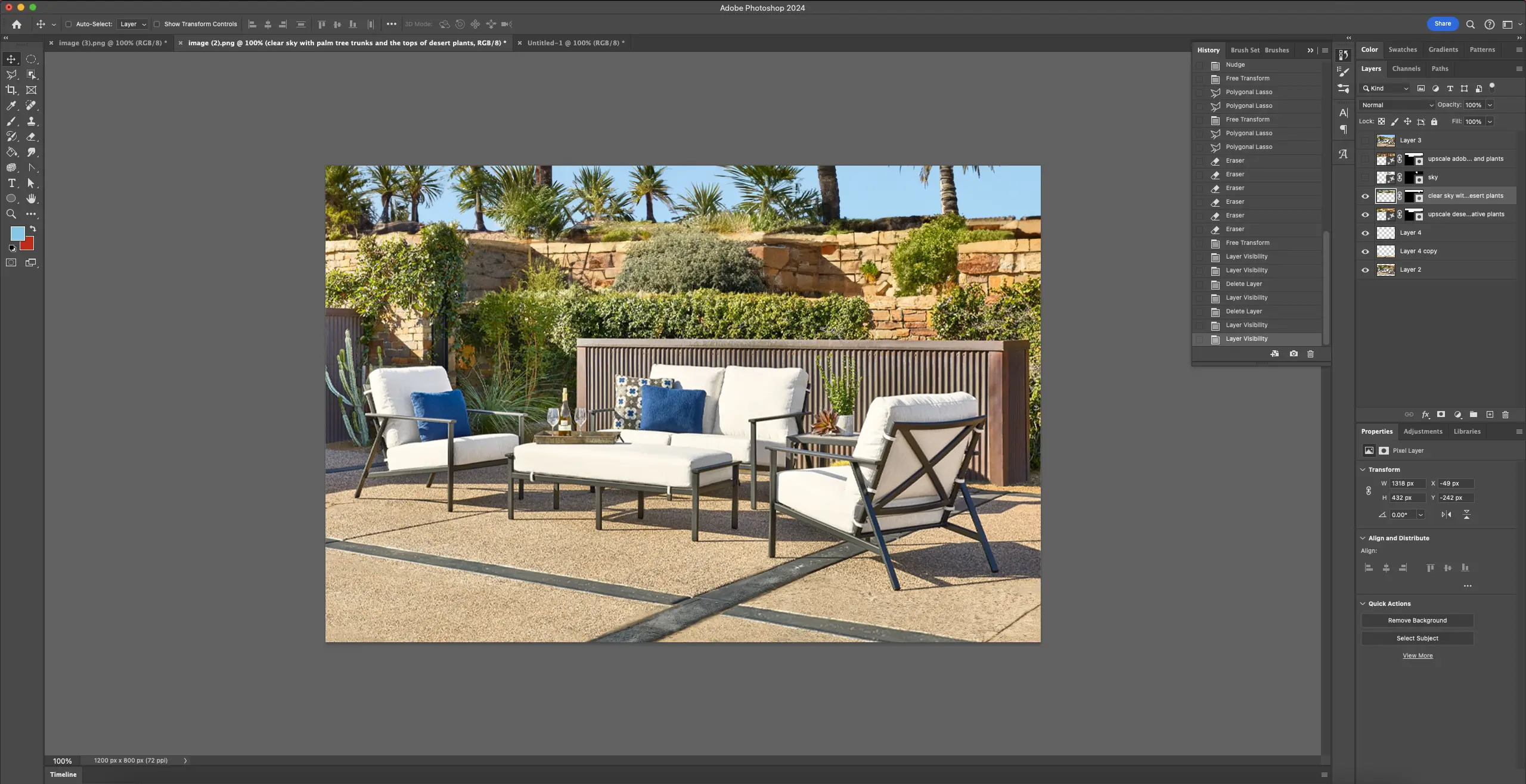

7. Once we're satisfied with the bottom half of the image, we re-enable the layers at the top of the composition and crop the canvas to our desired dimensions to arrive at the final image.
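The canvas arithmetic in steps 1 and 7 is simple but easy to fumble by hand. The Python sketch below is purely illustrative and not part of the Photoshop workflow itself. It assumes the expansion keeps the original width and only adds rows at the top and bottom, uses a hypothetical 10% safety margin for the "taller than the final crop" canvas, and returns the crop box as (left, top, right, bottom) pixel coordinates. The function names and example dimensions are made up for illustration.

```python
def plan_expansion(width, height, aspect_w, aspect_h, margin_pct=10):
    """Plan a width-preserving vertical canvas expansion (illustrative only).

    Returns (final_height, canvas_height, pad_top, pad_bottom):
    final_height is the height of the target crop, canvas_height adds a
    safety margin of margin_pct percent on top of it, and the pads split
    the new empty rows as evenly as possible between top and bottom.
    """
    final_height = round(width * aspect_h / aspect_w)
    if final_height <= height:
        raise ValueError("target aspect ratio is not taller than the original")
    # Make the canvas deliberately larger than the final crop.
    canvas_height = final_height + final_height * margin_pct // 100
    extra = canvas_height - height
    pad_top = extra // 2
    pad_bottom = extra - pad_top
    return final_height, canvas_height, pad_top, pad_bottom


def crop_box(canvas_width, canvas_height, final_height, top=None):
    """Return a (left, top, right, bottom) box for the final crop.

    Centers the crop vertically unless an explicit top offset is given,
    e.g. to keep more of the generated sky than the generated patio.
    """
    if top is None:
        top = (canvas_height - final_height) // 2
    if not 0 <= top <= canvas_height - final_height:
        raise ValueError("crop box falls outside the canvas")
    return 0, top, canvas_width, top + final_height


# Hypothetical example: a 1600x900 (16:9) photo expanded to a 4:3 frame.
final_h, canvas_h, pad_top, pad_bottom = plan_expansion(1600, 900, 4, 3)
print(final_h, canvas_h, pad_top, pad_bottom)  # 1200 1320 210 210
print(crop_box(1600, canvas_h, final_h))       # (0, 60, 1600, 1260)
```

The padding values are what you would enter when enlarging the canvas (for example, in Photoshop's Canvas Size dialog), and the crop box marks where the final frame sits once the generated areas are finished.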

Final Thoughts on Generative Fill and AI Creative Tools (As of 2023)

The new Photoshop feature, like all AI tools, falls short of being the autonomous "co-pilot" that Adobe bills it to be, but its promise is already clear.

While Adobe Photoshop's Generative Fill tool presents a significant leap forward in automating complex image editing tasks, it is not imminently poised to replace human designers entirely.

The tool excels in streamlining traditionally labor-intensive processes, such as expanding cropped photos, by quickly generating realistic and convincing content. However, its imperfections highlight the need for human guidance. Its results almost always require fine-tuning, and writing good AI prompts is a skill in itself.

The step-by-step workflow above illustrates that even with precise prompts, the AI-generated content may lack the nuanced details and accuracy needed for a realistic result.

The need for manual touch-ups and adjustments, especially to remove unexpected artifacts and to blend new content seamlessly with the existing image, underscores the crucial role of human intervention. Generative Fill serves as a competent and efficient production assistant, but it falls short of complete autonomy; the best results still come from collaboration between AI tools and human designers.